Predict Heart Disease playground-series-s6e2

#Kaggle Playground Series - Predict Heart Disease

Welcome to the Kaggle Playground Series, Season 6, Episode 2. This is the Predict Heart Disease competition, and it is actually my first Kaggle competition. I have submitted notebooks to Kaggle before, but none of them were serious, so this is my first time doing a Kaggle competition solo. As a disclaimer, this write-up was written by me, not by an AI or LLM.

#Motivation

I have always been interested in machine learning and in making predictions, grounded in math and statistics, from the huge amounts of data we have. It becomes especially interesting in medicine, for example when predicting heart disease. I see machine learning in the medical field as a way to do deeper research into human biological systems.

For example, in this playground you get to understand which variables are more strongly correlated with heart disease. From the data analysis, we get to understand how age, cholesterol, and sex matter to the model. This was very interesting.

#Problem statement

The problem statement in this project is to predict heart disease from the given data. The trick, however, is that the data we are given was generated by a deep learning model, which I assume is some kind of LLM trained on a heart disease prediction dataset.

The dataset for this competition (both train and test) was generated from a deep learning model trained on the Heart disease prediction dataset.

Predicting Heart Disease

Playground Series - Season 6 Episode 2

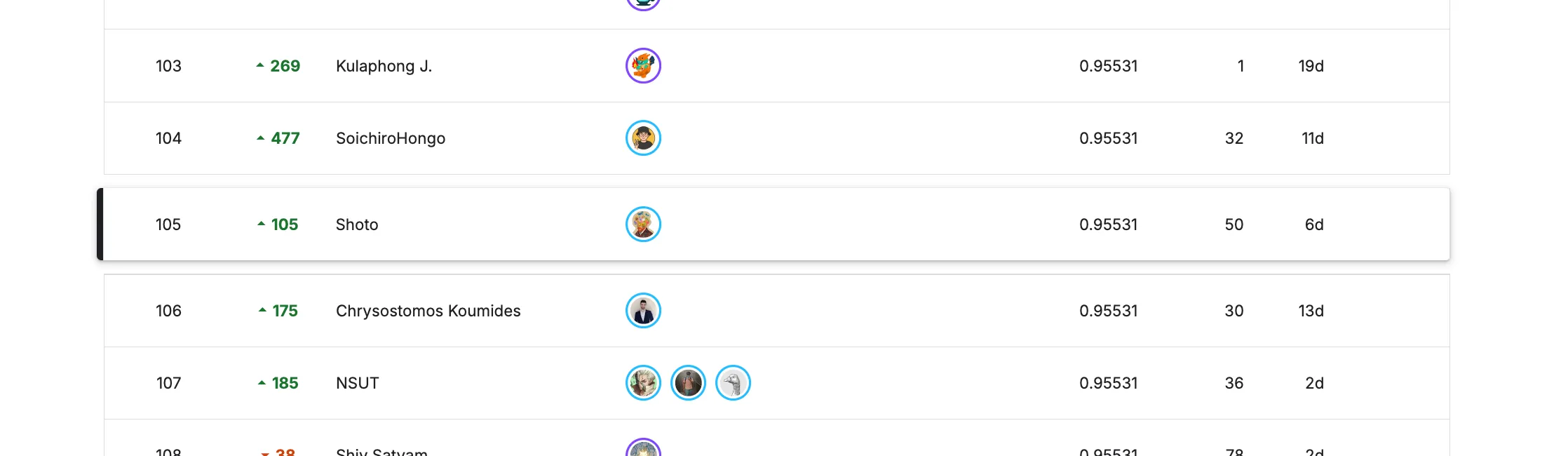

We need to be careful about overfitting. We shouldn't overfit to data that are probably not real, and we also need to be aware that some of the labels may be hallucinated; this is referred to as data flipping. During the competition, a lot of people hit a ceiling at about 0.955 and could not beat this score.

This was basically because of the Bayes error of the dataset. This is the theoretical limit of any predictor, reached when the features simply do not contain enough information to separate the classes. The original dataset would contain 70% of the flipped data, and the training dataset would contain 10% of the flipped data. There was a whole discussion about how the flipped data were generated and how we can train a model to get around the Bayes error rate.

A key reason is label noise that is not random. There are samples where the clinical features strongly indicate “healthy,” yet the label is “disease,” and vice versa. This isn’t simple annotation error; it looks like conditional noise tied to specific feature patterns.

I think this is our problem statement for this Kaggle competition: how do we go beyond the theoretical Bayes error?
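
To make the ceiling concrete, here is a minimal simulation sketch. It assumes random, symmetric label flips at a made-up 5% rate, which is a simplification: the noise discussed above appears to be tied to feature patterns, and 5% is not a figure from the competition. The helper names (`roc_auc`, `flip_labels`) are hypothetical. The point is only that even an oracle score, one that ranks the clean labels perfectly, loses AUC once it is evaluated against flipped labels.

```python
import random


def roc_auc(y_true, scores):
    """AUC via the rank-sum (Mann-Whitney U) formula, with average ranks for ties."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2.0 + 1.0  # 1-based average rank of the tie group
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    rank_sum_pos = sum(r for r, y in zip(ranks, y_true) if y == 1)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2.0) / (n_pos * n_neg)


def flip_labels(labels, rate, rng):
    """Flip each binary label independently with probability `rate`."""
    return [1 - y if rng.random() < rate else y for y in labels]


rng = random.Random(0)
n = 20000
clean = [rng.randint(0, 1) for _ in range(n)]

# Oracle score: every clean positive scores above every clean negative.
oracle = [y + 0.5 * rng.random() for y in clean]

# Assumed flip rate of 5% (illustrative only, not taken from the competition).
noisy = flip_labels(clean, 0.05, rng)

auc_clean = roc_auc(clean, oracle)
auc_noisy = roc_auc(noisy, oracle)
print(f"AUC vs clean labels: {auc_clean:.4f}")
print(f"AUC vs noisy labels: {auc_noisy:.4f}")
```

Under these assumptions (balanced classes, flip rate p, flips independent of the features), the oracle's expected AUC against the noisy labels is 1 - p. No amount of modeling can recover the lost part, because the flipped labels carry no information that the features can explain.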

#EDA

The dataset is large and clean: train has 630,000 rows with 15 columns, test has 270,000 rows with 14 columns, and the only column missing from test is the target, Heart Disease. The id column has no duplicates, and there is zero overlap between train and test ids. The target is moderately imbalanced but not extreme: Absence is 347,546 (55.166%) and Presence is 282,454 (44.834%).

Missing values are effectively nonexistent, but there are meaningful zero‑rate patterns: several features are mostly zero (FBS over 120, Exercise angina, Number of vessels fluro), and ST depression has a high zero rate as well. These zeros likely represent clinically normal cases and create a strong contrast against positive cases.

Feature relationships are consistent and intuitive! Presence tends to show higher Age, higher ST depression, more vessels fluro, and higher Thallium, while Max HR is lower on average. BP shows little separation. On the categorical side, the target rate shifts sharply for Sex, Slope of ST, Vessels, Thallium, and Exercise angina. Finally, simple train vs test drift checks (KS/PSI for numeric and total variation for categorical) show extremely small differences, so train/test distributions are effectively aligned.

- Train/test: (630000, 15) vs (270000, 14); only Heart Disease is missing in test, no duplicate ids, no overlap.

- Target balance: Absence 55.166%, Presence 44.834%.

- No missing values; high zero rates in FBS over 120, Exercise angina, Number of vessels fluro, ST depression.

- Strong signals: Presence ↑ Age, ST depression, Vessels, Thallium; Presence ↓ Max HR; BP ~ flat.

- Minimal train/test drift by KS/PSI and categorical TV distance.
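
The drift checks mentioned above can be sketched in plain Python. This is a minimal sketch, not the exact code I ran: the function names are hypothetical, the PSI uses equal-width bins built from the first sample (quantile bins are also common), and the "age" samples are synthetic numbers made up for the demo.

```python
import math
import random
from collections import Counter


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(a), sorted(b)
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d


def psi(expected, actual, n_bins=10, eps=1e-6):
    """Population Stability Index with equal-width bins taken from `expected`."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1  # clamp out-of-range values
        return [max(c / len(values), eps) for c in counts]

    e, a = bin_fractions(expected), bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def total_variation(cat_a, cat_b):
    """Total variation distance between two categorical samples (0 same, 1 disjoint)."""
    pa, pb = Counter(cat_a), Counter(cat_b)
    keys = set(pa) | set(pb)
    return 0.5 * sum(abs(pa[k] / len(cat_a) - pb[k] / len(cat_b)) for k in keys)


# Synthetic stand-ins for a numeric column in train and test.
rng = random.Random(0)
train_age = [rng.gauss(54, 9) for _ in range(5000)]
test_same = [rng.gauss(54, 9) for _ in range(5000)]     # same distribution
test_shifted = [rng.gauss(60, 9) for _ in range(5000)]  # deliberately shifted

ks_same, ks_shift = ks_statistic(train_age, test_same), ks_statistic(train_age, test_shifted)
psi_same, psi_shift = psi(train_age, test_same), psi(train_age, test_shifted)
print(f"KS  same={ks_same:.4f} shifted={ks_shift:.4f}")
print(f"PSI same={psi_same:.4f} shifted={psi_shift:.4f}")
```

When train and test come from the same distribution, as they appear to in this competition, all three numbers stay close to zero; the shifted sample is included only to show what drift would look like.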

#Ensemble

For ensembling, I started with two strong but highly correlated baselines: CatBoost and RealMLP. Their OOF AUCs are already near the ceiling, with CatBoost at 0.955611 and RealMLP at 0.955689. I also used seed bagging to stabilize the estimates and reduce variance.

A simple probability mean blend offered only a tiny gain! The mean‑prob ensemble reached 0.955723 OOF AUC, and a weighted 0.80/0.20 blend reached 0.955673. On the public leaderboard, the prior CatBoost‑centered ensemble landed around 0.95351. The marginal improvement is expected: these models make very similar errors, and CV performance is already constrained by the “~0.955 ceiling” from label noise and split luck.
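
A minimal sketch of the two blends, on synthetic data rather than the real OOF files. The two "models" here are made-up scores that share most of their signal and noise, which is my stand-in for two highly correlated baselines; `blend`, `roc_auc`, and the noise levels are all assumptions chosen for illustration.

```python
import math
import random


def roc_auc(y_true, scores):
    """AUC via the rank-sum (Mann-Whitney U) formula, with average ranks for ties."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2.0 + 1.0  # 1-based average rank of the tie group
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    rank_sum_pos = sum(r for r, y in zip(ranks, y_true) if y == 1)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2.0) / (n_pos * n_neg)


def blend(pred_lists, weights=None):
    """Weighted average of per-model probability lists (equal weights by default)."""
    if weights is None:
        weights = [1.0 / len(pred_lists)] * len(pred_lists)
    assert abs(sum(weights) - 1.0) < 1e-9, "weights should sum to 1"
    n_rows = len(pred_lists[0])
    return [sum(w * p[i] for w, p in zip(weights, pred_lists)) for i in range(n_rows)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


rng = random.Random(0)
y = [rng.randint(0, 1) for _ in range(5000)]
shared = [yi + rng.gauss(0, 1.0) for yi in y]  # signal and noise both models see
model_a = [sigmoid(s + rng.gauss(0, 0.1)) for s in shared]  # small model-specific noise
model_b = [sigmoid(s + rng.gauss(0, 0.1)) for s in shared]

auc_a = roc_auc(y, model_a)
auc_b = roc_auc(y, model_b)
auc_mean = roc_auc(y, blend([model_a, model_b]))
auc_weighted = roc_auc(y, blend([model_a, model_b], weights=[0.8, 0.2]))
print(f"A={auc_a:.5f} B={auc_b:.5f} mean={auc_mean:.5f} weighted={auc_weighted:.5f}")
```

Because the two synthetic models share almost all of their errors, averaging can only cancel the small model-specific part, so the blended AUC moves very little. That is the same pattern as in my OOF numbers.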

Next steps are focused on diversity rather than more of the same model: stacking with a logistic regression meta‑model over OOF predictions, conditional label‑noise modeling and rank‑based adjustment, and wider seed bagging to further stabilize output. These are aimed at improving ranking rather than raw probabilities, which matters most for AUC.

- OOF AUC: CatBoost 0.955611, RealMLP 0.955689.

- Simple blend: mean‑prob 0.955723, weighted 0.80/0.20 0.955673.

- Public LB (prior CatBoost ensemble): 0.95351.

- Why gains are small: high model correlation + CV split luck near the 0.955 ceiling.

- Planned: stacking over OOFs, conditional noise modeling, and expanded seed bagging.
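
As a rough sketch of the planned stacking step, the block below fits a tiny logistic regression on the logits of two sets of OOF predictions. Everything here is an assumption for illustration: the OOF predictions are synthetic, the helper names are hypothetical, and the gradient-descent fit is hand-rolled to keep the example dependency-free (in practice a library implementation would be the natural choice). The meta-model is fitted on one half of the rows and scored on the other half so that it is not evaluated on its own training rows.

```python
import math
import random


def roc_auc(y_true, scores):
    """AUC via the rank-sum formula (ties get average ranks)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2.0 + 1.0
        i = j + 1
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    rank_sum_pos = sum(r for r, y in zip(ranks, y_true) if y == 1)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2.0) / (n_pos * n_neg)


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def logit(p, eps=1e-6):
    p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
    return math.log(p / (1.0 - p))


def fit_logistic(X, y, lr=0.1, epochs=200):
    """Batch gradient descent on the log loss; returns (weights, bias)."""
    n, d = len(X), len(X[0])
    w, bias = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + bias) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        bias -= lr * grad_b / n
    return w, bias


def predict(X, w, bias):
    return [sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + bias) for xi in X]


rng = random.Random(0)
n = 1000
y = [rng.randint(0, 1) for _ in range(n)]
# Synthetic OOF probabilities from two base models with independent errors.
oof_a = [sigmoid(2.0 * (yi - 0.5) + rng.gauss(0, 1.0)) for yi in y]
oof_b = [sigmoid(2.0 * (yi - 0.5) + rng.gauss(0, 1.5)) for yi in y]
X = [[logit(pa), logit(pb)] for pa, pb in zip(oof_a, oof_b)]

half = n // 2
w, bias = fit_logistic(X[:half], y[:half])       # fit the meta-model on one half
stacked = predict(X[half:], w, bias)             # score it on the other half
auc_a = roc_auc(y[half:], oof_a[half:])
auc_stack = roc_auc(y[half:], stacked)
print(f"weights={w} best single AUC={auc_a:.4f} stacked AUC={auc_stack:.4f}")
```

The synthetic base models here make independent errors on purpose, which is the situation where stacking has something to gain. With two models as correlated as my CatBoost and RealMLP, the meta-model would have much less to work with, which is why the plan puts diversity first.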

#Result

#Top LB writeup

Diversity, Selection, and Trusting the CV–LB Relation

The first-place author's idea is to create multiple slightly different models, select an effective combination of them, and then combine their outputs using a simple linear model. This approach works very well. In this case, he did as much feature engineering and model training as possible. From this, I think that binning, digit features, and frequency encoding are likely the main feature engineering techniques we need to apply in this competition.

Additionally, he used gradient boosting, LightGBM, CatBoost, and RealMLP models, which are all approaches that were discussed in the discussion posts.
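
Here is a small sketch of what these three feature types could look like. It reflects my own reading of the terms, not code from the first-place write-up: the function names are hypothetical, the binning is plain equal-width, "digit features" is interpreted as splitting an integer value into its decimal digits, and the cholesterol values are made up.

```python
from collections import Counter


def equal_width_bin(values, n_bins):
    """Assign each value to one of `n_bins` equal-width bins (0 .. n_bins - 1)."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins or 1.0
    return [min(int((v - lo) / width), n_bins - 1) for v in values]


def digit_features(value, n_digits=3):
    """Split a non-negative integer into its last `n_digits` decimal digits, ones first."""
    return [(value // 10 ** k) % 10 for k in range(n_digits)]


def frequency_encode(values):
    """Replace each value with the fraction of rows that share it."""
    counts = Counter(values)
    return [counts[v] / len(values) for v in values]


# Made-up cholesterol readings, for illustration only.
cholesterol = [233, 250, 204, 233, 354]

bins = equal_width_bin(cholesterol, 3)
digits = [digit_features(v) for v in cholesterol]
freq = frequency_encode(cholesterol)

print(bins)       # bin index per row
print(digits[0])  # ones, tens, hundreds digit of 233
print(freq)       # share of rows with the same value
```

Frequency encoding computed this way does not touch the target, so it does not leak labels. Whether to compute the counts on train only or on train and test together is a separate design choice that I have not settled here.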

You do not always need one dominant “magic” model.

To me, this was very insightful. Among the key takeaways, he mentioned that we do not necessarily need one huge model to win the competition. Instead, it is more important to research extensively, understand the data, and experiment with different approaches and models to determine which methods are most effective.

1st Place Solution — Diversity, Selection, and Trusting the CV–LB Relation | Kaggle

Avoid leaks and overfitting

He said that data leakage was the biggest risk in this competition, so he avoided target encoding and used a careful cross-validation setup to ensure that validation data was never used when creating features. Success came from avoiding leakage, preventing overfitting, and combining multiple diverse models with stacking, rather than from building one extremely complex model.
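
To illustrate the fold discipline, here is a minimal sketch in which a feature is computed for each validation fold using training-fold rows only. Note the assumptions: the write-up avoided target encoding altogether, and I use a per-category target mean here only because it makes the leakage risk easy to see. The function names, the simple interleaved fold split, and the toy data are all hypothetical.

```python
from collections import defaultdict


def kfold_indices(n, k):
    """Deterministic k-fold split: fold f holds the rows i with i % k == f."""
    return [[i for i in range(n) if i % k == f] for f in range(k)]


def oof_target_mean(categories, targets, k=5):
    """Per-category target mean, computed for each row from the other folds only."""
    n = len(categories)
    encoded = [None] * n
    for valid_idx in kfold_indices(n, k):
        valid = set(valid_idx)
        sums, counts = defaultdict(float), defaultdict(int)
        for i in range(n):
            if i not in valid:  # statistics come from training rows only
                sums[categories[i]] += targets[i]
                counts[categories[i]] += 1
        # Fallback for categories unseen in the training folds: training-fold mean.
        fallback = sum(sums.values()) / sum(counts.values())
        for i in valid_idx:
            c = categories[i]
            encoded[i] = sums[c] / counts[c] if counts[c] else fallback
    return encoded


# Toy data, for illustration only.
categories = ["a", "a", "b", "b"]
targets = [1, 0, 1, 1]

encoded = oof_target_mean(categories, targets, k=2)
print(encoded)
```

A naive version would give both "a" rows the value 0.5, which already contains each row's own label. In the fold-safe version, a row's encoding never includes its own target, so the validation score is not inflated by the feature itself.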