#Gemini 3 Hackathon Tokyo

I recently participated in the Gemini 3 Hackathon Tokyo, sponsored by Google DeepMind and Supercell. In this report, I would like to share my experience at the hackathon and give an overview of the project I built. This article was written by a human, not AI.

#Motivation behind this hackathon

When I first decided to join this hackathon, I was thinking of casually building some kind of AI project using Gemini 3 or perhaps a voice model. At the time, I was participating in a Kaggle Playground competition and was particularly interested in ensembling and model development, so I was leaning toward building something more ML-focused.

However, once I arrived at the venue, I realized that because Supercell was one of the sponsors, we were expected to build a game—or at least a tool that would contribute to game development. I hadn’t known this beforehand, so that was a bit of a setback for me.

Another big motivation was that I heard Shane Gu, a research engineer developing Gemini at Google’s U.S. headquarters, might attend. I really wanted to meet him. He represents this AGI era in many ways, and many of his published papers, some of which laid the foundation for Gemini’s reasoning processes, are highly cited across the machine learning field. Although he wasn’t born in Japan, he has shown interest in the Japanese language and culture, which I believe is why he came to serve as a judge at this event.

The hackathon began with his presentation. Looking back at the history of AI, from statistical and mathematical approaches like deep learning to systems like AlphaGo that exceeded human cognitive abilities and systems embedded into robotics, we can see how AI has evolved. Today, we are in the era of AI agents. One of the graphs he showed illustrated autonomy and embodiment, which made the future feel incredibly exciting.

#Happy hacking

After presentations from Justin at Supercell and Shane from Google DeepMind, the coding session began. The atmosphere was incredibly energetic. Most participants were from countries such as the United States, Canada, Australia, and Cambodia, so English was spoken frequently alongside Japanese.

It truly felt like an international gathering. Seeing the judges conversing in English also reinforced the sense that this was a global event.

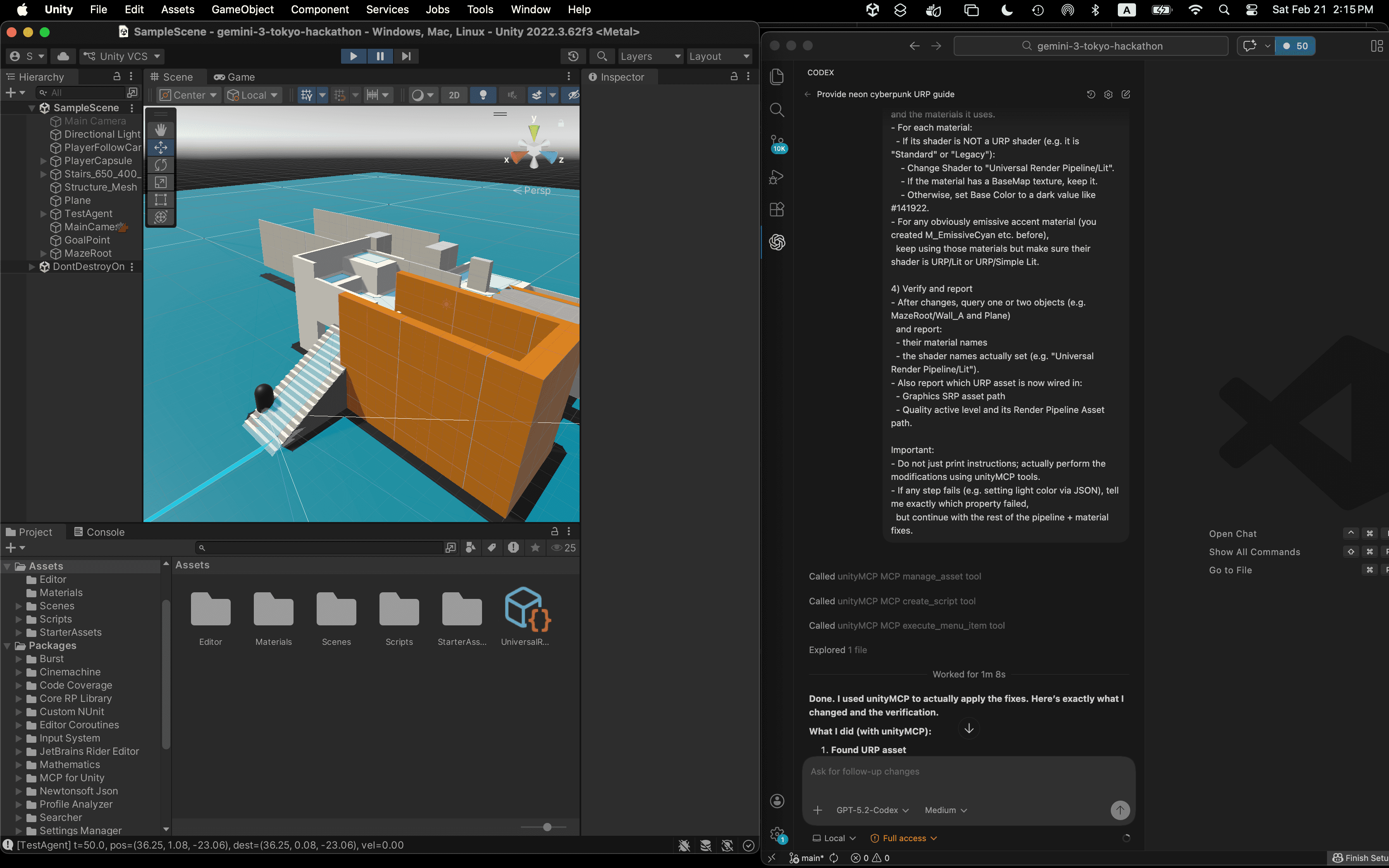

#My Project: E2E Unity Simulator

The project I worked on was called the E2E Unity Simulator.

In recent years, games such as Mario Kart and The Legend of Zelda have evolved into open-world formats. Even Pokémon, which previously wasn’t considered open-world, has shifted in that direction. It’s easy to imagine that the scope of testing required now far exceeds what developers can manually cover.

As a developer, I wanted to help game creators use AI to detect bugs and improve game quality in areas that human testers might not realistically explore.

Development started at 10 a.m., and since it was my first time ever using Unity, I spent a significant amount of time just installing it. Then I had to create my first project, download assets, and assemble them into a coherent environment. That alone probably took three to four hours, which was a major time cost during the hackathon.

One thing that made me very happy was that a friend I met at the venue, Rowan, introduced me to MCP for Unity. By using MCP to send instructions to Unity from Codex, I was able to compensate for my lack of Unity knowledge. It noticeably improved my workflow efficiency.

Eventually, I managed to build a simple hide-and-seek–style game in Unity. From there, I attempted to have Gemini 3 navigate the map and generate bug reports.

One major challenge arose when Gemini entered buildings. Once inside, it often lost track of its location, which made evaluation difficult. The map contained three treasure chests as the user’s objective. However, if Gemini entered a dark building before completing the task, it would become confused. Even with Gemini’s image recognition capabilities, I felt that this scenario was difficult.
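The exploration loop described above can be sketched roughly as follows. This is a minimal illustration, not the code I actually ran at the hackathon: `capture`, `decide`, and `apply_action` are hypothetical callables standing in for a Unity screenshot, a Gemini request, and an input command, and the action names are assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Decision:
    action: str                        # hypothetical action name, e.g. "forward"
    bug_report: Optional[str] = None   # free-text note if the model saw something wrong
    chest_found: bool = False          # True when the model reports reaching a chest


@dataclass
class RunResult:
    steps: int = 0
    chests_found: int = 0
    bug_reports: List[str] = field(default_factory=list)


def run_episode(
    capture: Callable[[], bytes],            # assumed: returns the current screenshot
    decide: Callable[[bytes], Decision],     # assumed: wraps the model call
    apply_action: Callable[[str], None],     # assumed: sends the action to the game
    total_chests: int = 3,
    max_steps: int = 200,
) -> RunResult:
    """Loop until every chest is found or the step budget runs out."""
    result = RunResult()
    while result.steps < max_steps and result.chests_found < total_chests:
        decision = decide(capture())
        if decision.bug_report:
            result.bug_reports.append(decision.bug_report)
        if decision.chest_found:
            result.chests_found += 1
        apply_action(decision.action)
        result.steps += 1
    return result
```

The step budget matters here: if the agent gets lost inside a building, the run still ends and whatever bug reports it collected so far are kept.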

Source: media.gamestop.com

One improvement I wanted to implement was feeding Gemini images at five-second intervals. By providing a sequence of images rather than just a single frame, I believe Gemini could better understand its current position and how it arrived there. With only one image, it may have been too difficult for the model to infer context.
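I did not get to implement this, but the idea could look something like the sketch below: a small rolling buffer that keeps one frame every five seconds and hands the most recent few to the model together. The `Frame` and `FrameHistory` names, the PNG byte format, and the default of four frames are all assumptions for illustration.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, List


@dataclass
class Frame:
    timestamp: float   # seconds since the run started
    image_png: bytes   # screenshot bytes (assumed capture format)


class FrameHistory:
    """Keeps the most recent frames, sampled at a fixed interval."""

    def __init__(self, interval_s: float = 5.0, max_frames: int = 4) -> None:
        self.interval_s = interval_s
        # deque with maxlen drops the oldest frame automatically
        self._frames: Deque[Frame] = deque(maxlen=max_frames)

    def offer(self, frame: Frame) -> bool:
        """Keep the frame only if interval_s has passed since the last kept one."""
        if self._frames and frame.timestamp - self._frames[-1].timestamp < self.interval_s:
            return False
        self._frames.append(frame)
        return True

    def as_context(self) -> List[Frame]:
        """Return the kept frames, oldest first, to attach to a single request."""
        return list(self._frames)
```

Sending the frames oldest first gives the model a short visual history of how it arrived at its current position, instead of a single isolated screenshot.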

I also thought about incorporating sound to help the agent better understand its surroundings. In The Legend of Zelda, for example, players perceive their location not only visually but also through audio. The soundscape changes at mountaintops, in deserts with sandstorms, or near NPCs where you can hear voices or horses’ hooves. Audio provides rich contextual information.

#Other Hackers

Ping Pong Game

This game was created by Ilia, who was sitting next to me.

The idea was to make use of the idle time developers experience while waiting on Claude Code or Codex. While an implementation was running, players could play a simple ping pong game and monitor the current development status at the same time. The system even adjusted HP based on the difficulty of the implementation tasks. I thought it was a clever and entertaining concept.

Food Fight Robot

This project was created by participants at the table next to mine.

The idea was a “Food Fight Robot” game. Participants uploaded food photos from their phones, which were transformed into food-based robots that battled one another. The concept was incredibly creative.

#Where to go from here

I learned many lessons from this hackathon.

One key takeaway is that winning a hackathon doesn’t necessarily mean solving a serious problem or building something that directly helps people. Instead, it’s about creating something judges find interesting—something sponsors see potential in, or something that sparks further discussion.

Whether you build developer tools or consumer-facing apps doesn’t matter as much as how compelling your concept is to the judges.

At the same time, I realized there are limits to integrating voice AI or LLMs into games and applications. For simple effects or messages, using a full LLM may not be necessary. Sometimes, pre-built systems with lower latency are better for improving user experience.
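One way to picture this trade-off is a simple lookup that answers common events instantly and only falls back to a slower generator for everything else. The event names, the canned lines, and the `generate` callable below are hypothetical; this is a sketch of the idea rather than anything built at the hackathon.

```python
from typing import Callable, Dict

# Pre-written lines for frequent, simple events (hypothetical examples).
CANNED_LINES: Dict[str, str] = {
    "chest_opened": "You found a treasure chest!",
    "player_hit": "Ouch!",
}


def message_for(event: str, generate: Callable[[str], str]) -> str:
    """Return a canned line for known events; otherwise call the slower generator."""
    canned = CANNED_LINES.get(event)
    if canned is not None:
        return canned          # no model call, so no added latency
    return generate(event)     # assumed to wrap an LLM or voice model request
```

With this split, the model is only called for events that actually benefit from generated text.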

Beyond the technical lessons, I made many new friends and had the chance to speak with respected judges and researchers I had long wanted to meet. I’m very grateful to Google DeepMind and Supercell for hosting this event, and I hope to participate in the next hackathon as well.